I decided to write a post about how to use the PipeWire camera support in Firefox, how to enable it, how to check if it’s working and how to debug possible problems.

Prerequisites

To use PipeWire camera support, you need Firefox 116 or newer. However, the latest Firefox 122 includes major PipeWire camera changes, making it actually usable (you can read about it in my previous blog post).

The PipeWire camera is not enabled by default. To enable it, go to “about:config” in your address bar, find the “media.webrtc.camera.allow-pipewire” option, and enable it. Afterward, restart Firefox.
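If you would rather set the preference from a script than click through about:config, one option is the per-profile user.js file, which Firefox reads at startup. The following is only a sketch: the helper name enable_pipewire_camera is made up for this example, and the profile directory you pass in is an assumption that you have to look up yourself (about:profiles lists it).

```shell
#!/bin/sh
# Sketch: append the PipeWire camera pref to a profile's user.js.
# enable_pipewire_camera is a hypothetical helper name; the profile
# directory is an assumption and must be supplied by the caller.
enable_pipewire_camera() {
    profile_dir=$1
    pref_line='user_pref("media.webrtc.camera.allow-pipewire", true);'

    if [ ! -d "$profile_dir" ]; then
        echo "profile directory not found: $profile_dir" >&2
        return 1
    fi

    # Add the line only once so repeated runs do not duplicate it.
    if ! grep -qxF "$pref_line" "$profile_dir/user.js" 2>/dev/null; then
        printf '%s\n' "$pref_line" >> "$profile_dir/user.js"
    fi
}
```

You would call it as enable_pipewire_camera followed by the path to your profile directory, then restart Firefox as described above.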

Using PipeWire camera in Firefox

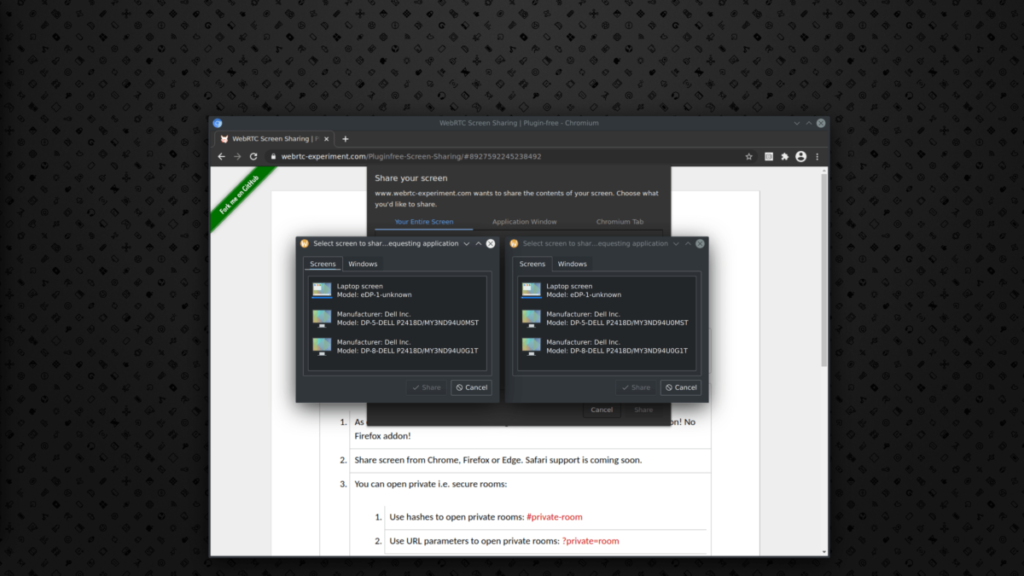

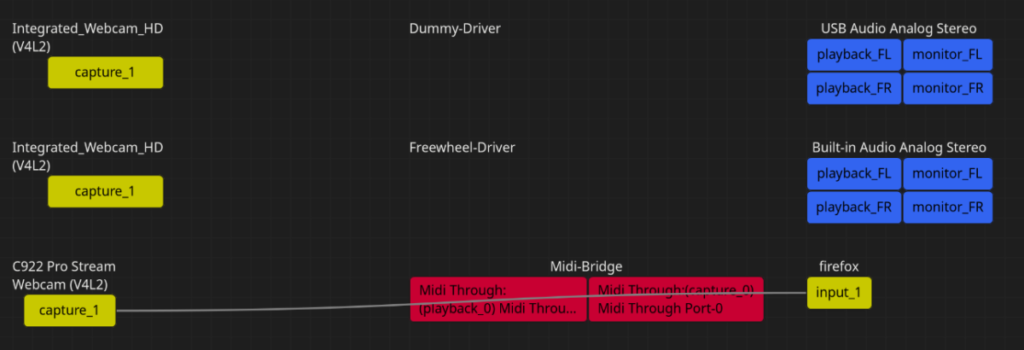

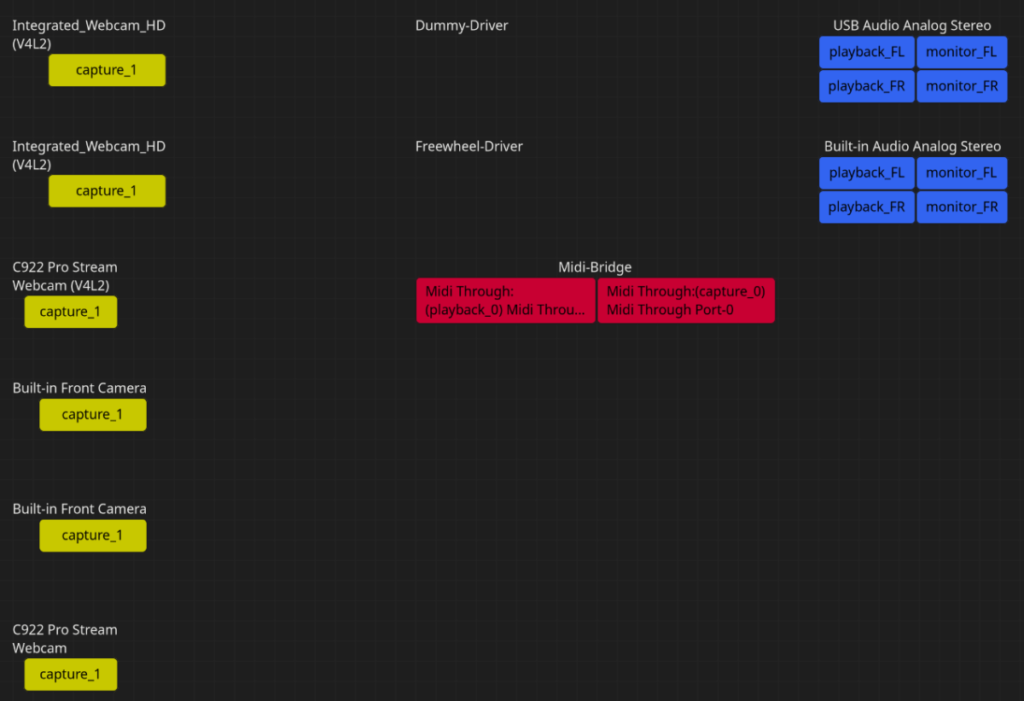

When using the PipeWire camera, you should not notice any difference compared to using V4L2. The only change is that you will receive an additional system dialog from xdg-desktop-portal asking for camera access, which is a one-time occurrence. Your cameras will have ‘(V4L2)’ in their name if no libcamera is involved (more on that later). This indicates that they are using V4L2 through PipeWire. You can also use an application like Helvum to check whether your camera is detected and used through PipeWire. Below, you can see what Helvum graphs look like when using my camera with PipeWire:

To verify that your camera is detected by PipeWire, you can use Helvum alone, without Firefox. In that case, your camera still appears on the left side, but it is not connected to any client on the right side.
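If you prefer a terminal over Helvum, the same check can be approximated with pw-cli, which ships with PipeWire. This is a rough sketch: count_video_sources is a hypothetical helper, and it assumes that each camera node in the "pw-cli ls Node" listing carries a media.class = "Video/Source" line.

```shell
#!/bin/sh
# Sketch: count camera nodes in `pw-cli ls Node` output read from stdin.
# count_video_sources is a hypothetical helper; it assumes each camera
# node is printed with a line containing media.class = "Video/Source".
count_video_sources() {
    # grep -c exits non-zero when nothing matches, so keep the status at 0.
    grep -c 'media.class = "Video/Source"' || true
}
```

Running "pw-cli ls Node | count_video_sources" should then print the number of cameras PipeWire knows about; a result of 0 suggests the problem is on the PipeWire side rather than in Firefox.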

PipeWire camera is not working

1) I don’t get any system dialog or Firefox says access to the camera has been rejected

If your camera is visible in Helvum, then PipeWire can detect it. First, verify that you have not previously denied access to the camera or accidentally clicked “do not allow” when prompted. To do this, reset the permission by running “flatpak permission-remove devices camera” so that you get asked again. If you still don’t get prompted, make sure xdg-desktop-portal is installed and running, along with either xdg-desktop-portal-gnome or xdg-desktop-portal-kde. Note that xdg-desktop-portal-wlr, for example, doesn’t support the camera portal, which is essential for this to work. To debug xdg-desktop-portal further, you can also restart it with the “G_MESSAGES_DEBUG=all” environment variable set.
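The checks above could be collected into a few small helpers, sketched below. All function names are hypothetical, nothing runs unless you call it, and setting DRY_RUN=1 only prints the commands. The portal binary path is an assumption based on the Fedora layout and may differ on your distribution. The value "all" (lowercase) is the spelling that GLib documents for G_MESSAGES_DEBUG.

```shell
#!/bin/sh
# Sketch of the portal checks described above. Nothing runs on its own;
# call the functions you need. Set DRY_RUN=1 to print the commands
# instead of executing them. All function names are hypothetical.
run() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Forget any earlier allow/deny answer so the portal asks again.
reset_camera_permission() {
    run flatpak permission-remove devices camera
}

# List the running portal frontend and backends by command line.
list_portal_processes() {
    run pgrep -af xdg-desktop-portal
}

# Replace the running portal with one that prints debug messages.
# The default binary path is an assumption (Fedora); adjust as needed.
portal_debug() {
    run env G_MESSAGES_DEBUG=all \
        "${PORTAL_BIN:-/usr/libexec/xdg-desktop-portal}" --replace
}
```

A typical session would be reset_camera_permission, then list_portal_processes to confirm that a GNOME or KDE backend is running, and finally portal_debug in a separate terminal while you retry the camera in Firefox.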

2) Camera is not working in general or once selected and allowed to be used

In this case, the best thing you can do is open a bug that describes your camera hardware and includes the output of Firefox run with PIPEWIRE_DEBUG=5 MOZ_LOG="MediaManager:5,CamerasParent:5,CamerasChild:5,VideoEngine:5". The cause might be a format that we (WebRTC) don’t support yet, an issue in the V4L2 integration, or a modern camera that doesn’t yet work well on Linux (e.g. an Intel MIPI camera).
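To make the log easy to attach to a bug, you could wrap the command in a small helper that saves everything Firefox prints to a file. This is a sketch under a few assumptions: run_firefox_with_camera_logs is a made-up name, FIREFOX_BIN defaults to whatever "firefox" resolves to on your PATH, and the default log file name is arbitrary.

```shell
#!/bin/sh
# Sketch: start Firefox with the debug variables from the text and save
# everything it prints. run_firefox_with_camera_logs is a hypothetical
# helper; FIREFOX_BIN and the default log file name are assumptions.
run_firefox_with_camera_logs() {
    log_file=${1:-firefox-camera.log}
    # env sets the variables for this one Firefox process only.
    env PIPEWIRE_DEBUG=5 \
        MOZ_LOG="MediaManager:5,CamerasParent:5,CamerasChild:5,VideoEngine:5" \
        "${FIREFOX_BIN:-firefox}" >"$log_file" 2>&1
}
```

Call run_firefox_with_camera_logs, reproduce the problem, close Firefox, and attach the resulting file to the bug. The log can contain details about your system, so skim it before uploading.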

3) Google Meet doesn’t work

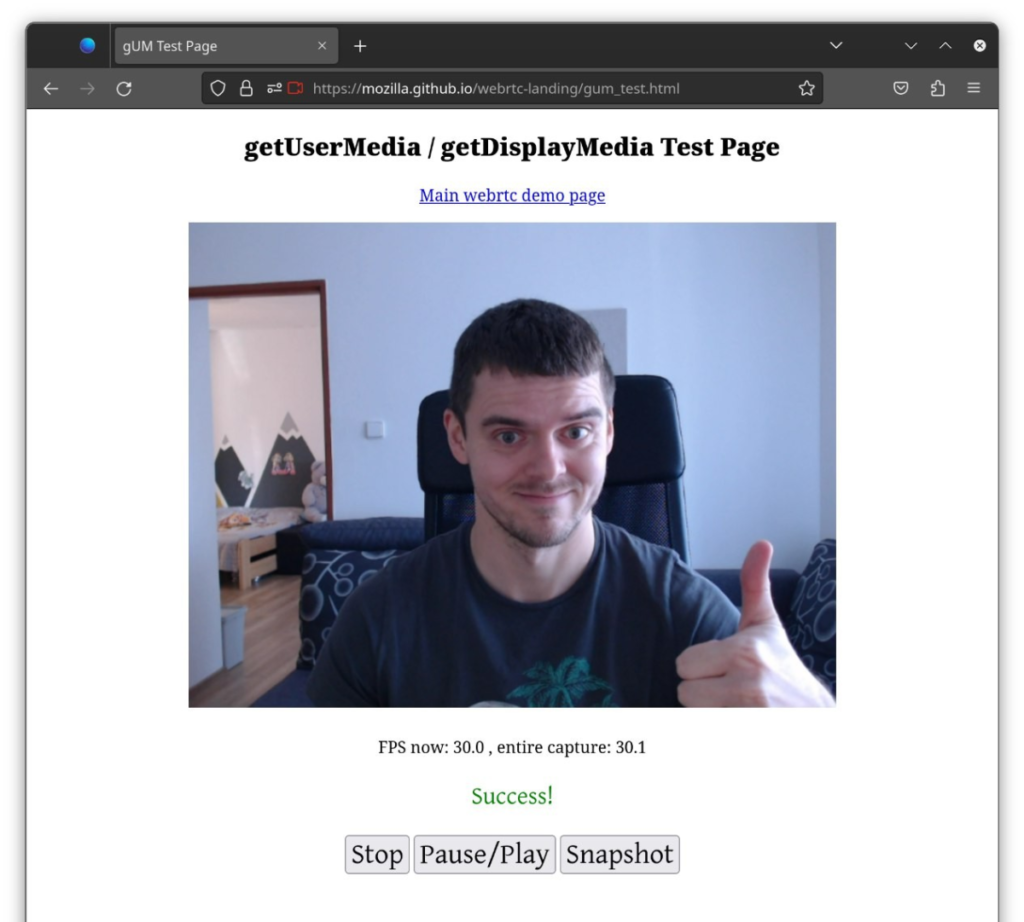

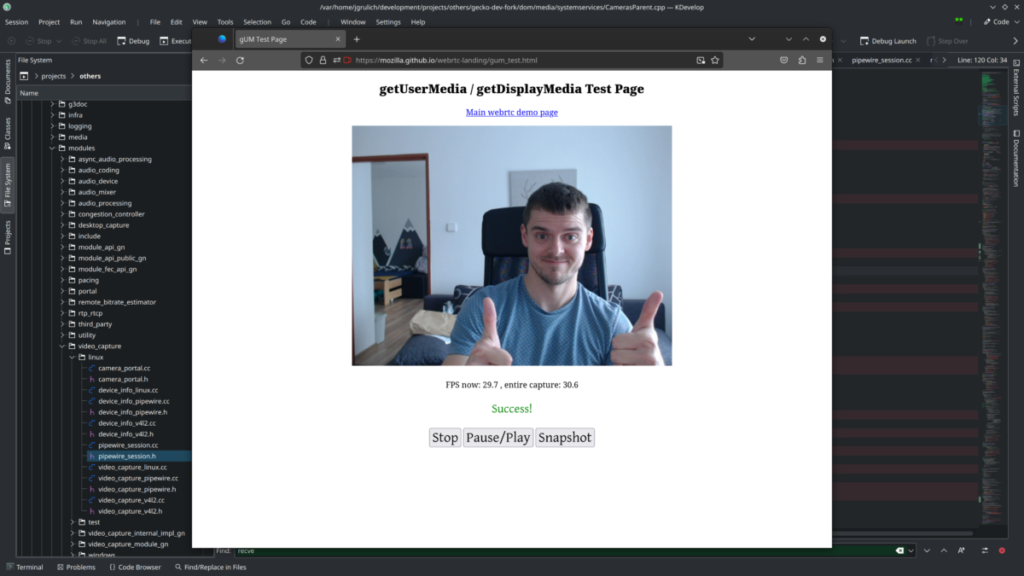

This is a known issue and actually a problem in Google Meet, which stopped working after a recent update. There is an upstream ticket in Firefox to track this problem, but sadly it hasn’t been resolved yet. To work around this issue, visit any camera testing page (e.g. this one) first, and then use Google Meet.

4) Firefox crashes when sharing my camera with two websites

Also a known issue. Here is an upstream WebRTC ticket and I have a change already submitted for review. This change will be backported to Firefox as soon as it’s merged in WebRTC upstream.

5) Any other issue

Please let me know about any other issue you run into. The best thing you can do is open a Firefox bug report and pick “WebRTC: Audio/Video” as the component.

Using libcamera

For some modern cameras, this may be the only way to make them work in Firefox. This is not a comprehensive guide on how to use it, but it is what made it work in my setup on Fedora.

1) Install libcamera and the libcamera plugin for PipeWire. On Fedora you can run:

sudo dnf install libcamera pipewire-plugin-libcamera

2) Restart PipeWire and WirePlumber (the PipeWire session manager) by running:

systemctl --user stop wireplumber

systemctl --user stop pipewire

systemctl --user start wireplumber

In the WirePlumber log output, you should then see something like:

led 30 15:30:43 fedora wireplumber[91582]: [2:36:44.619446407] [91582] INFO Camera camera_manager.cpp:284 libcamera v0.2.0

Compare that with the following output when the plugin is not installed:

led 30 12:54:29 fedora wireplumber[1925]: SPA handle 'api.libcamera.enum.manager' could not be loaded; is it installed?

led 30 12:54:29 fedora wireplumber[1925]: PipeWire's libcamera SPA missing or broken. libcamera not supported.
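The two outputs above can also be told apart automatically. The sketch below reads WirePlumber log text from stdin and reports which of the two messages appeared last. libcamera_status is a hypothetical helper, and the matching relies only on the message texts quoted above, which may change between versions.

```shell
#!/bin/sh
# Sketch: classify WirePlumber log text read from stdin using the two
# messages quoted above. libcamera_status is a hypothetical helper.
# The last matching line wins, so a log that spans a plugin install
# reports the most recent state.
libcamera_status() {
    awk '
        /libcamera SPA missing or broken/ { state = "missing" }
        /libcamera v[0-9]/                { state = "loaded" }
        END { print (state ? state : "unknown") }
    '
}
```

Assuming WirePlumber runs as a systemd user service, "journalctl --user -u wireplumber -b | libcamera_status" would feed it the log for the current boot.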

3) Check your camera in Helvum

In the screenshot above, my cameras are presented twice: once through the V4L2 integration in PipeWire and again thanks to libcamera. Unfortunately, I don’t know yet how to disable the V4L2 integration when using libcamera so that your cameras are not shown twice.

Anything else?

Currently, I am working on implementing a fallback mechanism to use V4L2 if PipeWire fails in certain scenarios (upstream bug | upstream change) to ensure that users still have a functioning camera in the case of a broken PipeWire/portal setup. However, I expect that many issues will be discovered as people begin to test this feature, for which I am very grateful.