New year, new challenges.

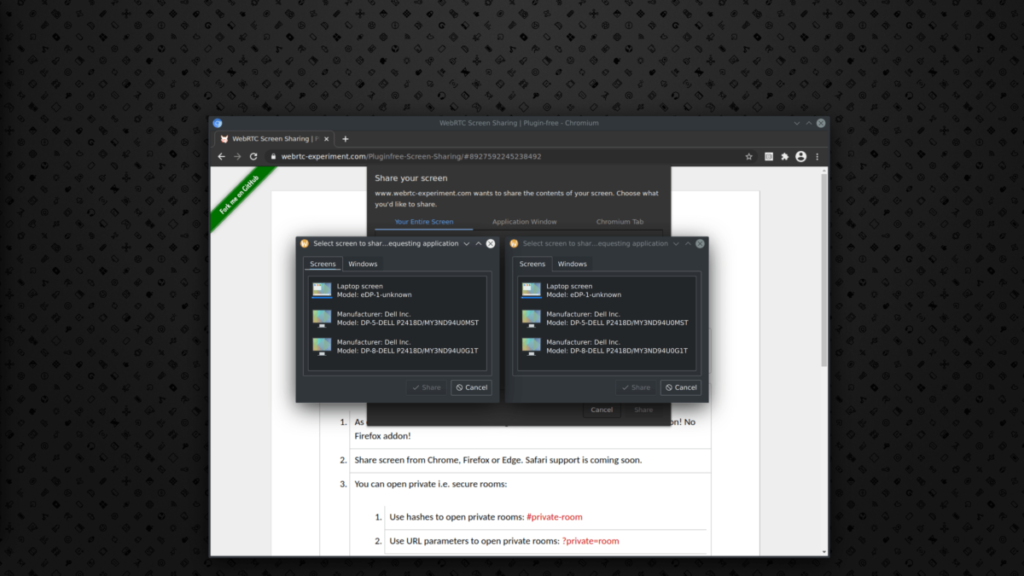

We reached a major milestone with Chromium 110: screen sharing on Wayland is finally enabled by default, so you no longer have to go into the preferences and enable the flag yourself. That doesn’t mean my work there is over, but I’ve shifted my focus to something related but slightly different: PipeWire camera support.

Work on PipeWire camera support started in 2021 and was done by Michael Olbrich (Pengutronix). He submitted a huge change to Chromium to add this support and had trouble finding a reviewer, because no one in the Chromium project knew anything about PipeWire. I saw his change request by accident, but we got in touch and decided to move the work to WebRTC instead, because having it lower in the stack means other browsers, like Firefox, get it automatically. Michael attended a meeting we used to hold regularly for screen sharing support in WebRTC, where we discussed how to implement PipeWire camera support in WebRTC and how to reuse some of the code we already had for screen sharing to avoid duplication. After a few changes that were submitted and then reverted (usually because they broke parts of Chromium that are not covered by CI, which has happened to me many times), we ended up with PipeWire camera support in WebRTC at the beginning of this year.

Journey to PipeWire camera support in Firefox

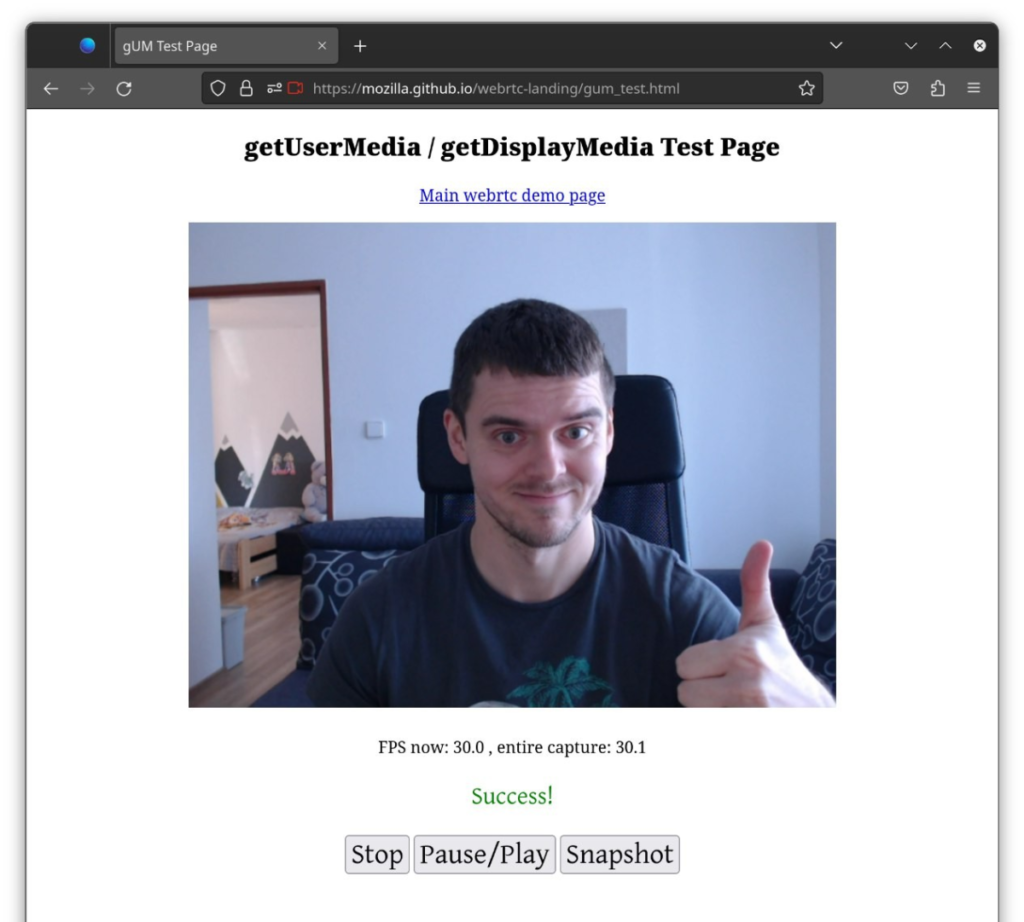

Until recently, my work was about 95% WebRTC and 5% Chromium, and I was not familiar with Firefox at all (not counting WebRTC backports). I started by fixing screen sharing support there before moving to the camera, because I noticed a few issues after Firefox (finally) rebased to a newer version of WebRTC. They’ve started doing monthly WebRTC rebases, which is a really good thing and I’m glad to see it happening. Anyway, even though Firefox has a more recent WebRTC these days, when I started in February there was still no PipeWire camera support at all, because its WebRTC was still a few months behind. I had to backport all the patches and make them work with Firefox. Only then could I start working on the actual PipeWire camera support in Firefox on top of WebRTC. While working on the backports I was still in the WebRTC space, so everything was somewhat familiar. Implementing the actual PipeWire support was a different story, and it took me some extra time to understand how everything works. This includes the camera API on the WebRTC side and camera support on the Firefox side, and I also had to learn all the APIs specific to Firefox, but admittedly, learning new things is fun too. After some trial and error it started to work, and I was able to share my camera using the PipeWire camera backend from WebRTC. You have to trust me that the picture below is not using the V4L2 backend.

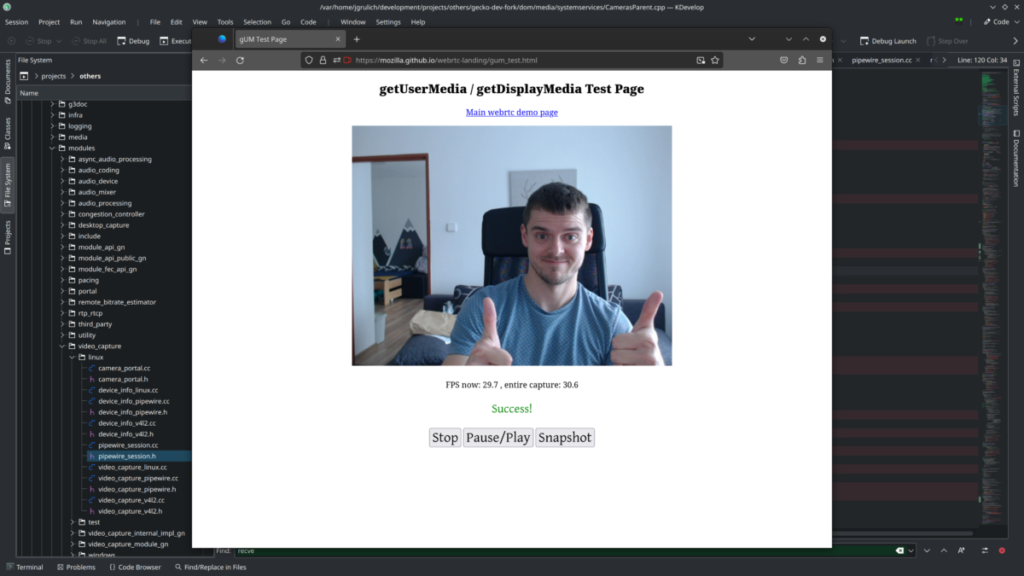

I went ahead and submitted my WebRTC backports and the PipeWire camera backend implementation to Firefox for review. Unfortunately, I was told that the code where I had placed my implementation could also be reached through the WebRTC JavaScript API, which bots use to check for camera presence on the client side. As someone who had only recently started working on camera support, I didn’t know that. This was a problem because we get PipeWire access through xdg-desktop-portal, which involves showing the user a dialog asking for camera access. Showing a camera request dialog to the user at random would not be a good experience. Going back to the drawing board, I talked to Andreas Pehrson (Mozilla/WebRTC). Andreas was a great help, and we came up with a way to implement it properly in Firefox and avoid the problem described above. This time it involved some reorganization in WebRTC, where I split the xdg-desktop-portal and PipeWire parts of PipeWire video capture, so that Firefox can request camera access only when appropriate and the backend only does the PipeWire work once access has been granted. So I implemented it again, this time according to what Andreas and I had agreed on, and it worked.
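The split described above can be illustrated with a minimal sketch. It is written in Python for brevity (the real code lives in C++ in WebRTC and Firefox), and every class, method, and value in it is hypothetical: it does not mirror the actual WebRTC or Firefox APIs. In particular, returning a generic placeholder device before access has been granted is an assumption of this sketch, not something taken from the real implementation. The only point is that probing for cameras never triggers the portal dialog, while an explicit capture request does, and only once.

```python
from typing import Callable, List, Optional


class CameraPortal:
    """Hypothetical stand-in for the xdg-desktop-portal camera request."""

    def __init__(self, ask_user: Callable[[], bool]):
        self._ask_user = ask_user  # would show the permission dialog
        self.prompts_shown = 0

    def request_access(self) -> Optional[int]:
        """Ask the user; return a fake PipeWire handle if access is granted."""
        self.prompts_shown += 1
        if self._ask_user():
            return 42  # placeholder value, not a real file descriptor
        return None


class PipeWireCameraBackend:
    """Hypothetical backend: only talks to PipeWire, never prompts."""

    def __init__(self, pipewire_handle: int):
        self._handle = pipewire_handle

    def list_cameras(self) -> List[str]:
        return ["camera-0"]  # a real backend would enumerate PipeWire nodes


class Browser:
    """Hypothetical browser side that decides when to ask for access."""

    def __init__(self, portal: CameraPortal):
        self._portal = portal
        self._backend: Optional[PipeWireCameraBackend] = None

    def enumerate_devices(self) -> List[str]:
        # May be called by scripts that only probe for a camera,
        # so it must never show the permission dialog.
        if self._backend is None:
            return ["camera (placeholder)"]  # assumption of this sketch
        return self._backend.list_cameras()

    def get_user_media(self) -> str:
        # An explicit capture request: the appropriate moment to ask.
        if self._backend is None:
            handle = self._portal.request_access()
            if handle is None:
                raise PermissionError("camera access denied")
            self._backend = PipeWireCameraBackend(handle)
        return self._backend.list_cameras()[0]
```

With a portal that always grants access, calling `enumerate_devices()` leaves `prompts_shown` at zero, the first `get_user_media()` call raises it to one, and later calls reuse the already-created backend without prompting again.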

This is now submitted for review again. Hopefully this time it will only need some minor fixes and not a complete rewrite like before, and you will be able to try/use it sooner rather than later. The main change is submitted here, but it is accompanied by other changes containing WebRTC backports or changes that make the backports buildable with Firefox. With the first version of the change I had a Fedora COPR repository, but I had to discontinue it because it was too hard to keep it in a buildable state on top of a stable Firefox. But you can be sure that Fedora will be the very first consumer of these changes once they are merged.

Why do we need this?

For many reasons. I would recommend reading a blog post from Christian Schaller, which explains everything in detail and gives you more information about the camera stack. The main reasons are:

- Security
  - Access to the camera must be granted by the user, so you can be sure that no one is using your camera behind your back.
- Flexibility
  - Your camera can be accessed by multiple clients simultaneously.
- Libcamera support
  - Needed for ARM devices or devices using ChromeOS

Chromium support

While support in WebRTC was finished a few months ago, Chromium originally didn’t use the WebRTC video capture API for camera support, so that had to be added. Michael implemented it and it is still pending review, so support in both Chromium and Firefox is implemented but waiting for approval.

Future plans

Most importantly, I want to get everything merged and working seamlessly, but I’m already aware of some issues and missing functionality in the PipeWire backend in WebRTC. We also have the same problem we used to have with screen sharing: it’s not enabled by default, not unit tested, and not feature complete, and these things take time to fix.